Right Content

The Buyer's Guide to Learning Content

TLDR: Content has become a commodity. Yet the best of it remains essential for learning, development and workforce planning. Finding the right content is harder and more important than ever. But there are now better ways to decide on the right content for your organisation and to get the most out of it for your workforce. The content itself holds much of the data you need, and algorithms can make it intelligible for you and your workforce.

Once upon a time, content was king. Bill Gates foretold as much twenty-five years ago. Over the next 20 years, as ad spend fuelled an explosion of digital media and blogging and social media democratised content distribution, that prophecy proved true. It materialised on the web (Facebook, YouTube, Twitch, Google) as well as in L&D (Lynda.com, Skillsoft). But in L&D for the past few years, it’s been technologies, platforms, skills and new-fangled concepts like talent marketplaces that have held the industry’s attention.

Potshots at contiguous ideas are common: that ‘content doesn’t build skills’, that we need ‘resources, not courses’, that ‘measuring content consumption is pointless’, or that ‘content has become a commodity’. There is some truth in this kind of thinking. Now, it’s the content consumer that’s king and queen. But just as with the wider web, content still reigns over our time, attention, and memory. And it makes its creators money.

As an L&D professional in charge of providing learning content, that’s money that you have to spend out of your budget. You’re under pressure to make sure it’s the right content for your organisation, its people and the skills they need. Here’s how you can do just that.

Does your workforce have the right content to develop the right skills? How would you know?

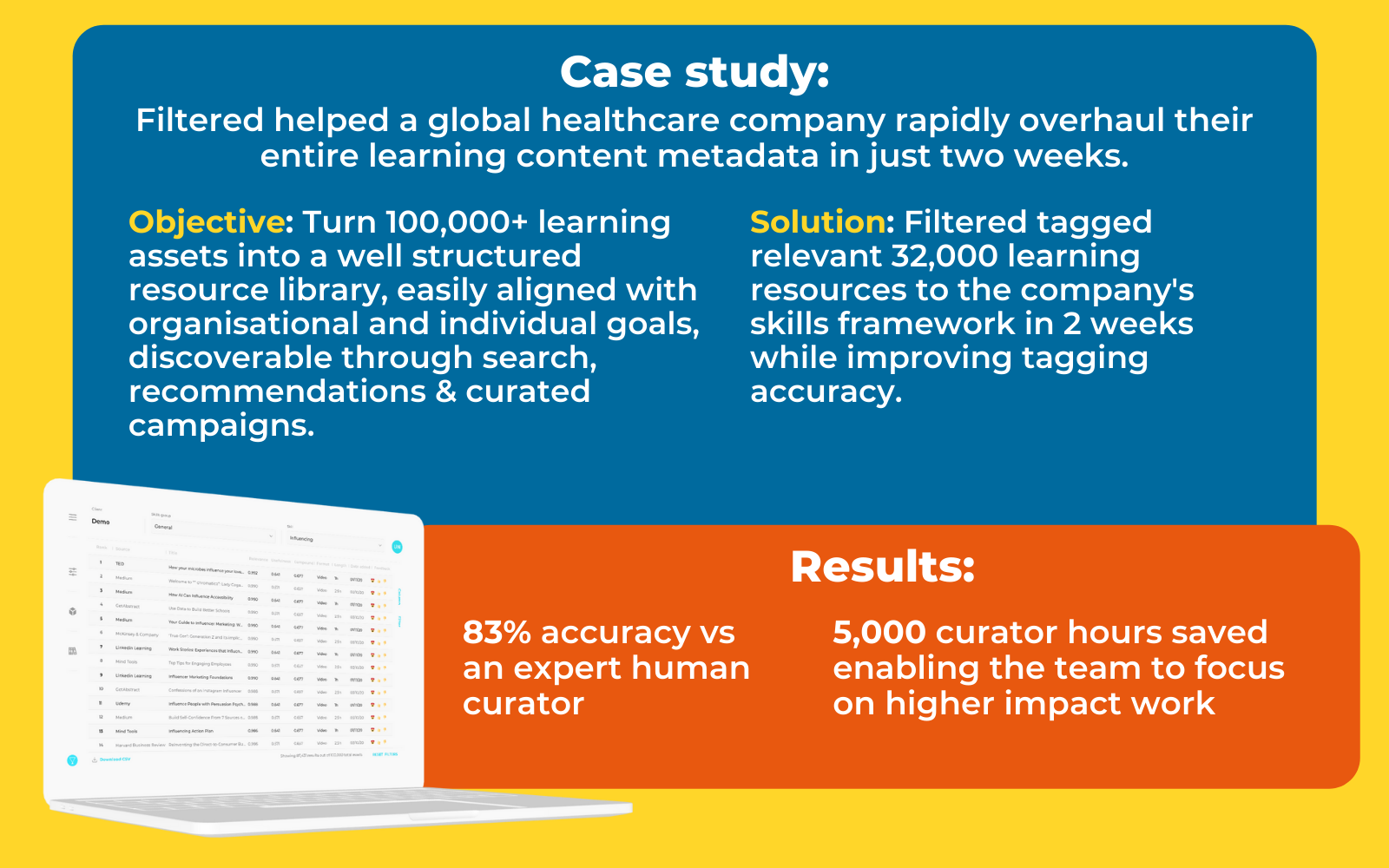

We’re often asked to answer these questions for clients. Not as a platform provider, though we have a platform. Not as a content provider, though we have content. We are asked because we spend all our time thinking about what ‘right content’ means - many years of human contemplation combined with billions of algorithmic calculations. It’s why we called our company Filtered - everything we do is about filtering the right content, for organisations and individuals.

From what we’ve seen, most organisations are only at the beginning of their journey to the right content. It’s such an important journey. Large organisations spend millions of dollars, pounds and euros on content libraries. They spend millions more creating proprietary content. It works out at about £50 per person per year, including smaller organisations. In total, this sustains a £40-100bn market for learning content - every year. And that sum is increasingly supplemented by individuals investing their own cash in personal skills development.

But are these institutions and individuals making sound investments?

There is just so much content that most find it hard to tell. During the sales pitch, many of the popular libraries highlight that they have tens of thousands of learning assets for employees to access. There are aggregators like Go1 and Open Sesame with hundreds of thousands. Then there are the millions of assets that workforces generate themselves (corporate user-generated content). And there are the almost immeasurable swathes of material accessible on the internet (Google’s most recent estimate has it at around 130 trillion pages). Most large companies end up with easily more than 100,000 learning assets at their disposal.

Yet for all this learning content, there’s very little data. Learning assets are not like songs on Spotify or TV series on Netflix or videos on YouTube. They don’t have the same universal appeal and so they don’t get the same airtime. In fact, the majority of the learning assets on your corporate servers are barely touched at all. There’s a very long tail of material that never sees the light of day, much of which goes stale as regulations and industry best practices evolve.

Consequently, usage data for this unused content is tiny, and what little there is stays behind corporate walled gardens. Usage at one organisation of HBR’s piece on timeboxing, for example, is not shared. As we’ve discussed with Josh Bersin, there’s no Rotten Tomatoes or IMDB of learning content, where our collective usage and experience data is shared and from which reliable insights can be drawn.

So if there’s so much to choose from and so little on which to base the choice, there’s a large margin for error - for sizeable, continual mistakes. And indeed, sizeable mistakes are continually made.

But this guide for curious, ambitious, determined content buyers has positive light to shed! Content itself is, after all, just data. There are trillions of data points in learning content itself. Each word, each sentence, each juxtaposition of a paragraph is data that algorithms can make sense of, if we know how to configure them, apply them and interpret their results. There is plenty of hope!

For this guide to be most useful, we’ll need to lay some groundwork in describing and discussing some of the fundamental elements in all this: content, skills and tags. In a nutshell, skills are the common currency as we use them to understand people’s ability to complete their roles and tasks on the one hand, and learning content’s potential to increase that ability on the other. Tags are the means by which we codify and scale that understanding.

The benefits of finding the right content are massive. We think of five benefits that come from taking the kind of approach advocated in this guide:

- Identifying and building the most important skills for your organisation

- Increasing availability of learning metadata

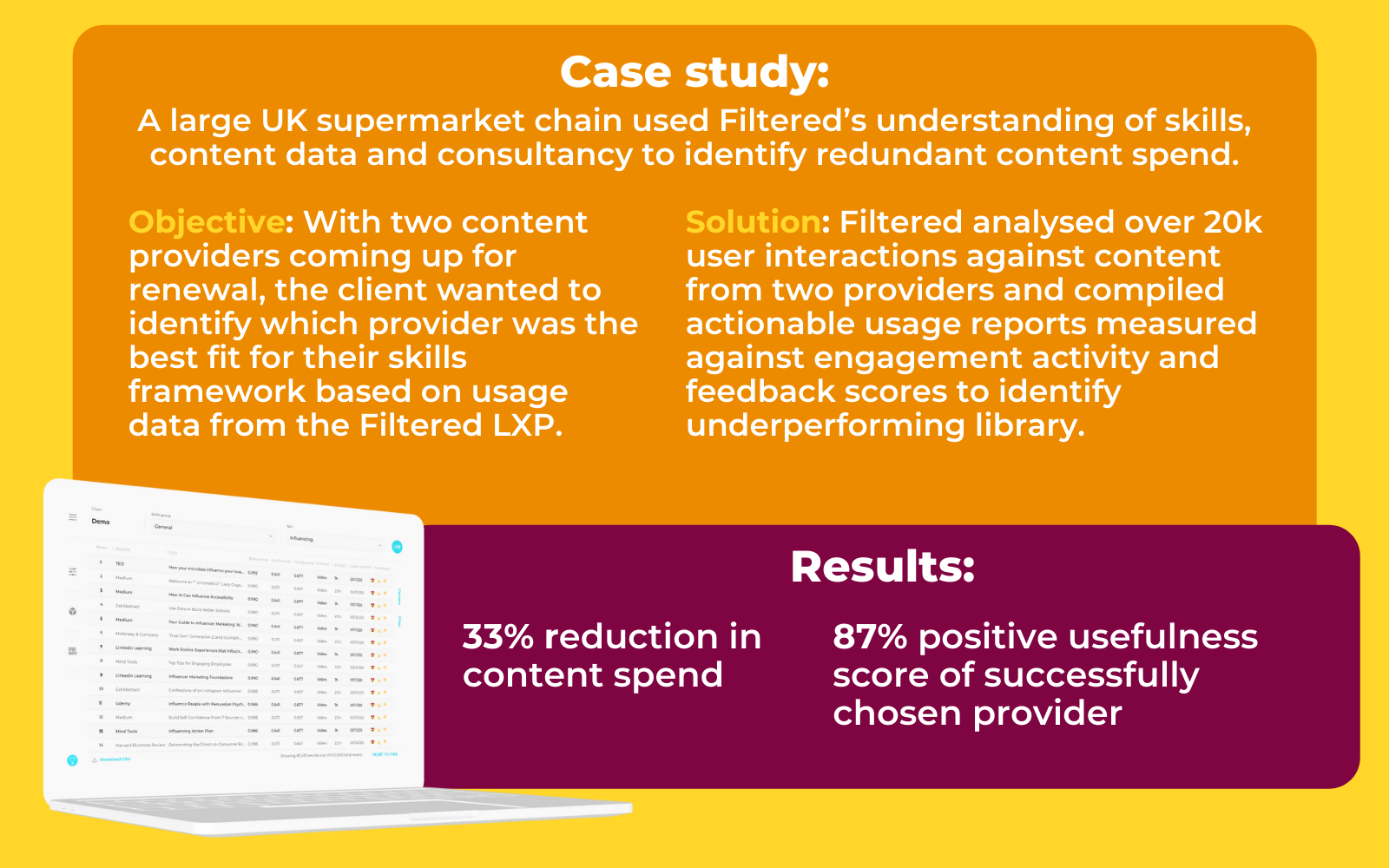

- Cutting unnecessary content spend

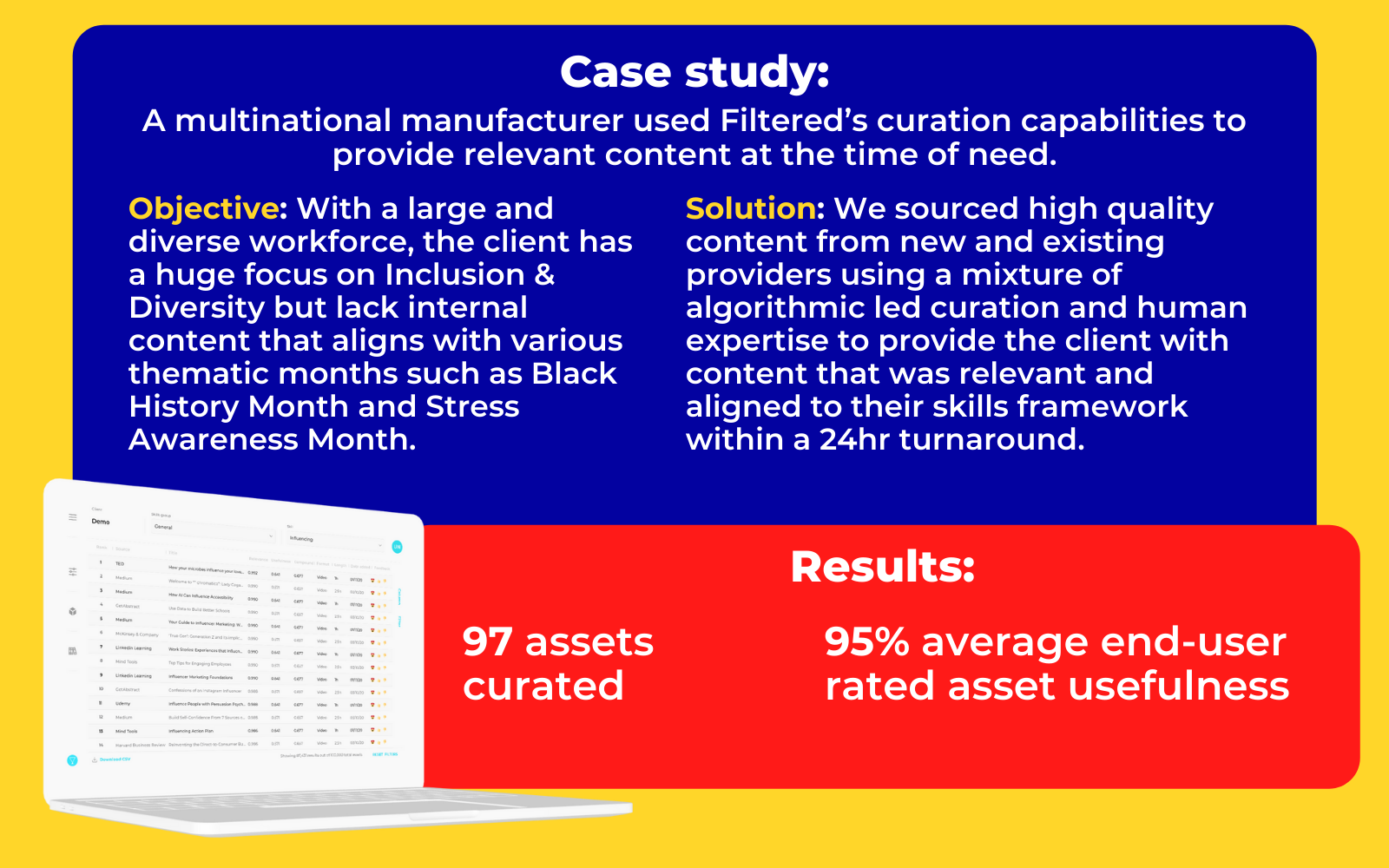

- Curating the best content you don’t already have

- Improving discoverability and user experience for your end-users

Three kinds of learning content

Others have written about different but related pools of content. Donald Taylor (a Filtered non-executive director) wrote about six kinds of content back in 2013:

We see it the same way, only we group the top three categories into one, which we call ‘Proprietary’:

Proprietary content is the kind your organisation creates itself. This has a bunch of interesting characteristics. It’s written or recorded by your own people. No other company has it. It’s the most relevant, most specific material to train and upskill your workforce. But it’s not all good news. Proprietary content tends to go out of date fastest and generally has the lowest-quality metadata by far - wrong or obsolete or, most often, simply missing. Since it doesn’t exist anywhere else it’s harder to benchmark it against content from elsewhere.

Generic library content is the type you license, alongside many other companies that pay for the same access. Of course, the full list of providers runs into the thousands (and so the full list of assets runs well into the millions), but here is one way of grouping them, along with examples of the players we encounter most commonly:

- General-purpose: Skillsoft, LinkedIn Learning, Virtual Ashridge

- Format-focused: getAbstract, HBR, McKinsey

- Marketplaces: Udemy

- Aggregators: Go1, Open Sesame

- MOOCs: FutureLearn, EdX, Coursera, Udacity

- Specialists: Pluralsight

- Web crawlers: AndersPink, OfCourseMe

Then there’s web content. For most domains of knowledge, understanding and skill, the best of the web is better than any other content. On the web, consumer is queen, and there are now almost 5 billion consumers actively using the internet. The prize of immense traffic attracts brilliant creators to create brilliant (or at least brilliantly effective) content. And the best at answering consumer questions is what rises to the top of search results pages and news feeds.

Most of the web may not be very good, but the very best is sublime.

I recently listed and described six publicly accessible online learning assets which have made an appreciable and enduring difference to how I think and behave at work and in life. You have an equivalent list, whether you call it to mind or not, and the same is true for each person in your organisation. There’s a sense in which the entire role of L&D is to help accelerate the growth of lists like this for our colleagues.

Learning is most potent when it draws from the widest pool of human experience. Imagine you want to help your teams present more effectively. There are courses and articles and videos about this and they may be helpful. Thinking a little beyond learning might get you to some outstanding speeches - actual exemplar performances (like this one, complete with a commentary and analysis), rather than obvious, how-to teaching material. If we were bolder and more imaginative still, we might include some ineffective presentations by high-profile figures - this can help colleagues battle confidence issues and imposter syndrome. If we were to really push the boat out, we might even point our colleagues to, say, successful, young Twitch streamers (such as Ludwig) who have developed large followings with an array of innovative, highly un-corporate techniques to talk to their audiences, live and uncut.

Taking the best content from the three buckets outlined above gives us the raw material to provide transformative learning experiences. But how do we even identify the best in the first place? It starts with skills.

The state of skills

Everyone’s talking about skills, upskilling and reskilling. So are we. The world changed dramatically at the start of 2020, shaking up priorities, job roles and entire industries. The skills required of the global workforce changed accordingly. And so we in L&D have needed to move quickly, and keep moving.

Skills are the new currency of work. People say this a lot. What do they mean? I think there are two meanings to it. The first is that they’re simply more important, given the economic turbulence. The second is that skills are relevant all the way along the employee lifecycle. Skills are listed in job ads, job descriptions, CVs and resumes, LinkedIn profiles, appraisals and reviews, as well as learning content.

Today, that currency is imperfect and inconsistent. But if more of us use consistently defined skills, more people will be able to find the roles they perform most effectively, and in which they are happy and fulfilled.

Skills data and frameworks

There are two key questions about skills: exactly which skills do we need and exactly what do we mean by each of those skills?

The first question, when translated into L&D-speak, is about skills frameworks (aka taxonomies or ontologies). In other words, what are the skills that I should emphasise at my organisation? And on what basis?

Designing a skills framework can be a deeply involved process. It can also be a simple, straightforward and quick one. We help companies in both ways.

Complex

This route - which most large companies tend to take - is ambitious. Proponents of this approach attempt to draw on multiple data sources:

- Job families

- Standardised job/role descriptions

- Industry trends

- Future skills

- Current and future business priorities

- Departmental and functional preferences

- Third-party job role data

- Hierarchies

- Career mobility

...and more, in order to build a future-proofed taxonomy. The process takes many months and can take longer. At the end of it though, if it’s done well, the framework should serve your company and its workforce for several years.

Simple

The other school of thought is that there’s a broad commonality between companies and industries when it comes to skills. 80% of those skills are transferable, so why not just take a pre-existing taxonomy and tweak it rather than build from scratch? That might be just 20 or 50 skills (the average is ~60). Sure, that won’t cover absolutely everything, but it will do a lot. Specific departments can cover the really nuanced skills.

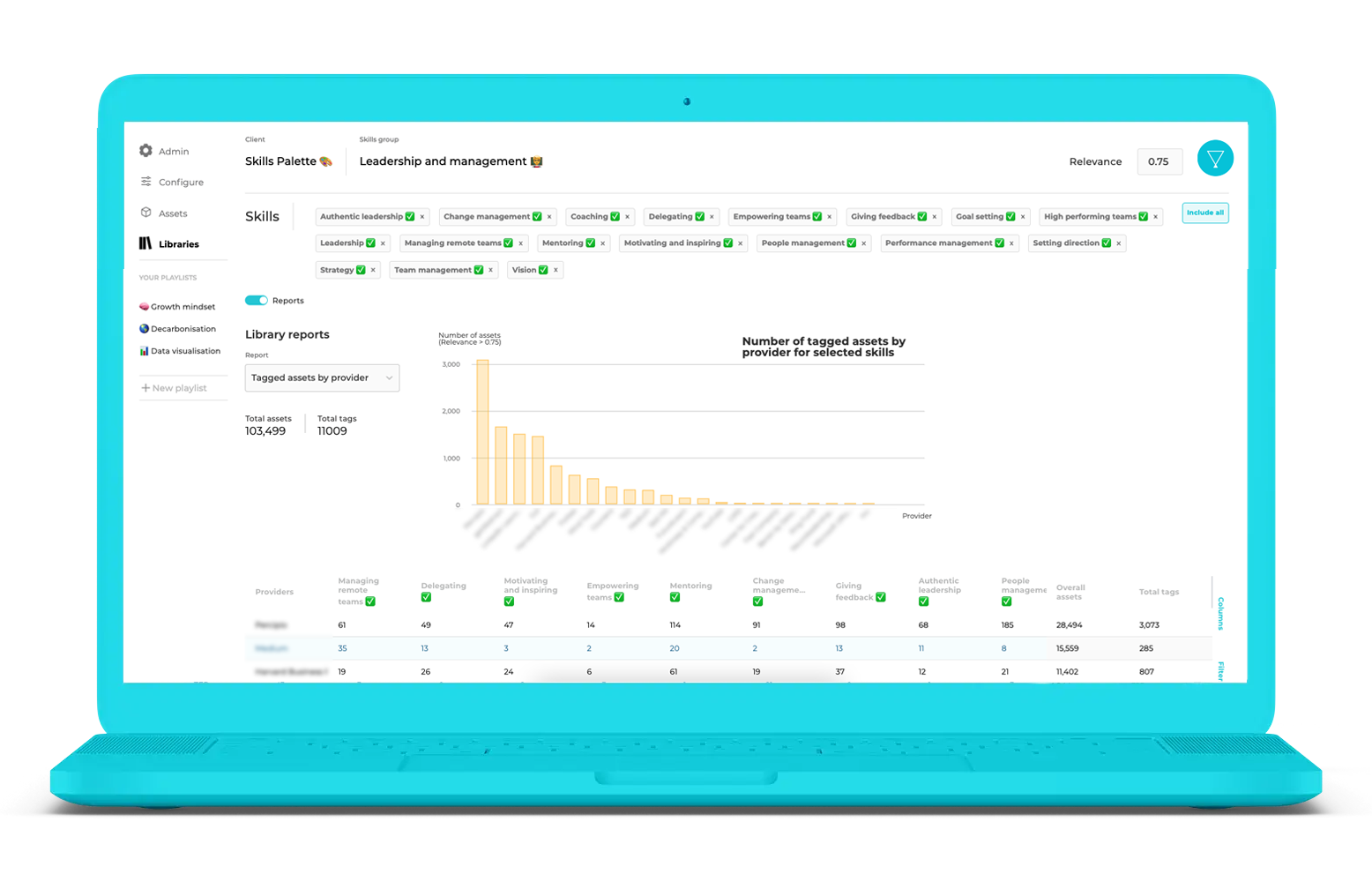

Both have their merits. We’re more inclined towards the second. Reviewing, revising and advising on the skills frameworks of dozens of organisations has led us to this view. Organisations have at least 80% in common. That has led us to developing our own Skills Palette, of ~100 skills, for companies that want to take this simpler approach.

For each segment, choose which of the skills you want. Tweak the definitions to reflect the nuance of your organisation (for example: do you want to have Sleep Management in Resilience? Should Difficult Conversations be in Teamwork or People Management or both? Is Excel part of the Microsoft set or part of Decision-Making?). Add anything that’s vital and missing. And you’re done, in just a few days.

Regardless of approach, an effective, future-proofed skills framework can be the glue between corporate strategy and the organisational capabilities required to fulfil it. As the strategy evolves, so too should the skills framework which serves it. So the creation of a high-quality skills framework is of strategic importance to the growth of organisations (and can accordingly raise the profile of the L&D functions that commission and manage it).

The importance of tagging content

The concept of tagging (aka classification, categorisation) understates the importance of the practice. As Daniel Levitin says in The Organized Mind:

Productivity and efficiency depend on systems that help us organize through categorization. The drive to categorize developed in the prehistoric wiring of our brains, in specialized neural systems that create and maintain meaningful, coherent amalgamations of things.

Good tagging is an essential enabler of effective information exchange and of effective learning. And almost no one is getting it right.

Suppose you have now arrived at a workable skills framework. How do you then make the best use of the different kinds of content to improve those skills for your workforce?

A foundational step here is tagging all of that content with one or more of those skills. This sounds simple, but when you have to scale to hundreds of thousands of assets with different kinds of data and metadata with each...complexity emerges. Many of the tags we see in organisations we work with are poor, exhibiting one or more of the following characteristics:

- Missing - tags and other metadata are just not present at all.

- Wrong - tags which do not accurately represent, indeed misrepresent, the learning asset.

- Imprecise - tags which are accurate (not wrong) but imprecise (not specific).

- Unindexable - the tags may be present, correct and precise but they still won’t be helpful to you and your organisation if they’re not in the terms of the skills framework that you’ve settled on.
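Two of the four problems above, missing and unindexable tags, can be detected mechanically. The sketch below is a minimal illustration under assumed inputs: the `framework` set, the asset names and the `audit_tags` helper are all hypothetical. Wrong and imprecise tags cannot be caught this way, since judging them requires looking at the content itself.

```python
# Hypothetical skills framework; replace with the skills you have settled on.
framework = {"Resilience", "Teamwork", "People Management"}


def audit_tags(asset_tags, framework):
    """Classify one asset's tags as 'missing', 'unindexable' or 'ok'.

    'unindexable' here means none of the tags are expressed in the
    terms of the chosen framework. Wrong or imprecise tags are NOT
    detected; those need a review of the content itself.
    """
    if not asset_tags:
        return "missing"
    if not any(tag in framework for tag in asset_tags):
        return "unindexable"
    return "ok"


# Hypothetical assets with the tags they arrived with.
assets = {
    "Onboarding deck": [],
    "Handling conflict (video)": ["soft skills", "HR-101"],
    "Bouncing back (article)": ["Resilience", "wellbeing"],
}

report = {name: audit_tags(tags, framework) for name, tags in assets.items()}
for name, status in report.items():
    print(f"{name}: {status}")
```

Running a check like this across a whole catalogue gives a rough count of how much content is invisible to your framework before any re-tagging starts.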

Winning at tagging content

The good news is that algorithms were made for this kind of big-data-narrow-scope task. At Filtered, we have developed our own algorithms which apply skills and other tags rapidly and reliably. We only need ~25 words of data to be up and running, and require zero training data. Zero.

That means we can take, for example, 10,000 proprietary learning content assets, each with very little metadata, and tag them all in the time it takes a human curator to make a cup of tea.

We strongly recommend that you ask vendors that claim to be able to achieve these kinds of ‘AI-driven’ results for some sample tagging output from some of your own data inputs (e.g. a handful of skills you deem important and some sources of content that you have or are considering purchasing). At Filtered, we are happy to provide that.

All of this impeccably tagged content against your skills framework allows you to make decisions about which of it is the right content. And that’s especially important when you’re about to invest in new libraries.

Never enter commercial conversations with content vendors unarmed. Most will offer the biggest library possible, or the most content in the world. We advocate the opposite approach - a less-is-more, filter! philosophy. Having loads of content doesn’t mean it’s the right content; indeed, the truth is closer to the reverse of this.

Relevance

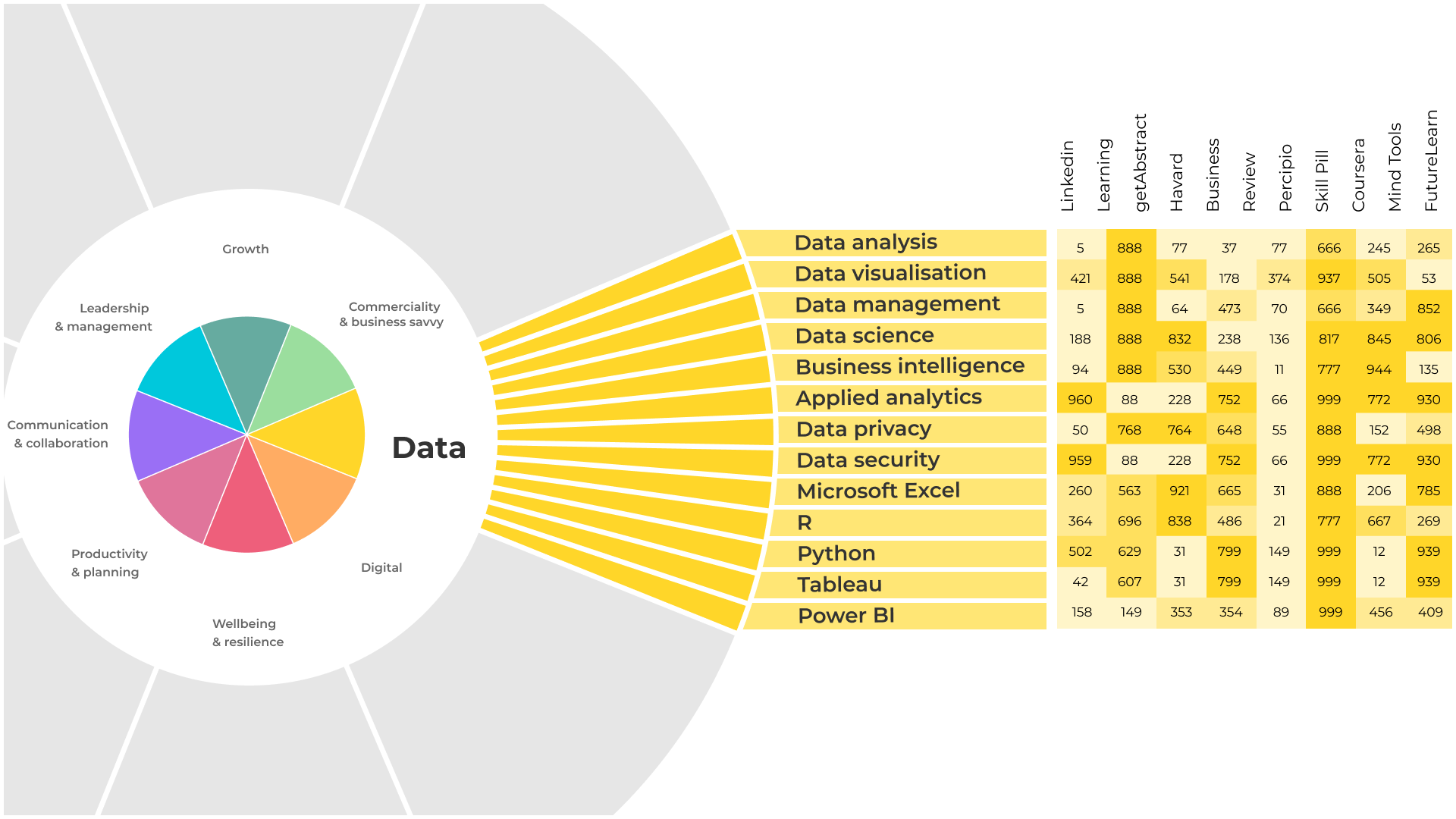

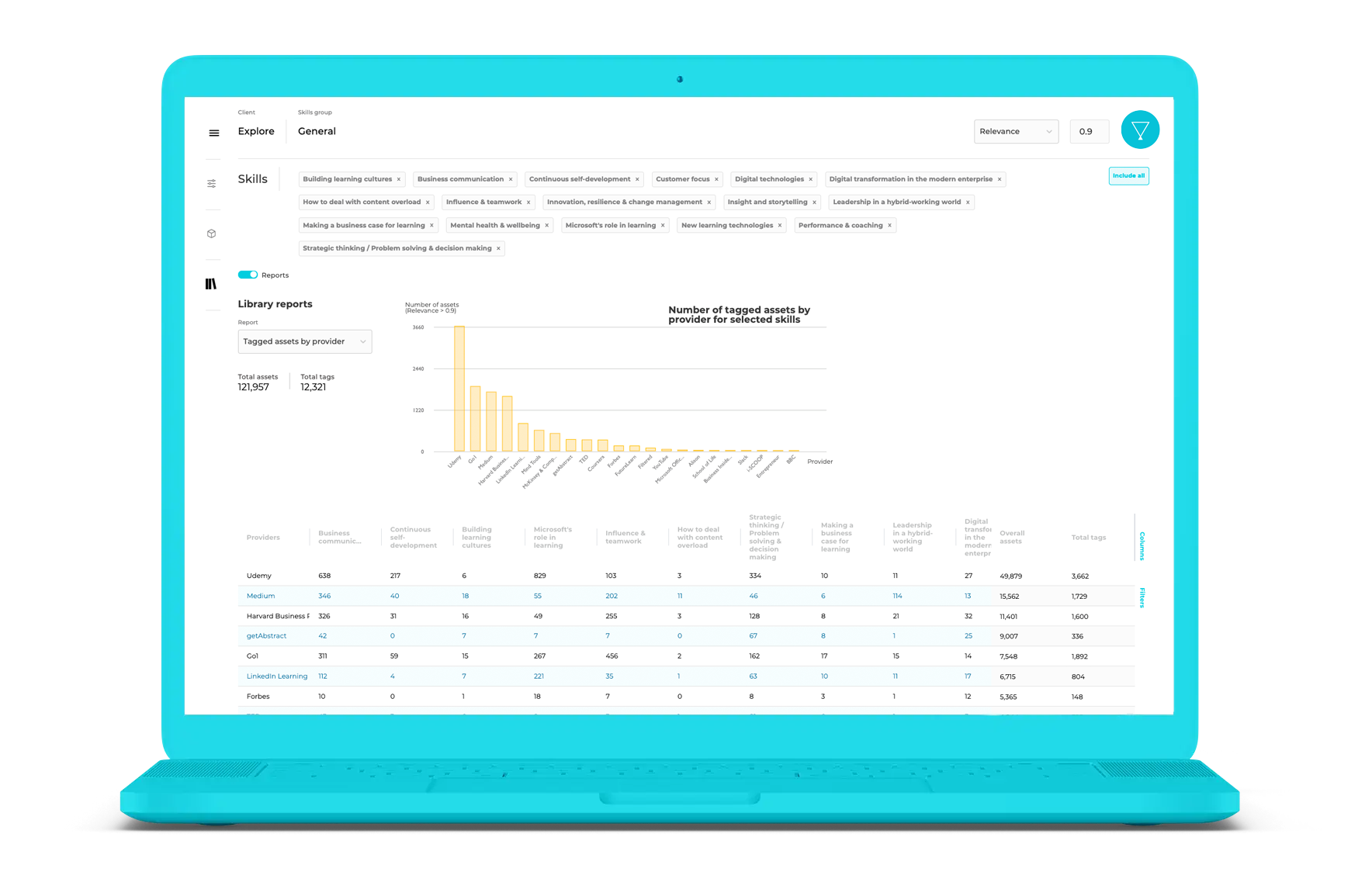

Having established your skills framework, the first buying question is how much of each of these libraries is relevant to those skills? The vendor may be able to give you a sense of this from the native tags they have. Or you can take a fast and more objective algorithmic approach like that of Filtered’s Content Intelligence, which can summarise the most valuable libraries for your specific framework:

By entering your skills into Content Intelligence, either from the palette or your own framework, you get an instant appraisal of exactly which library or combination of libraries deliver the best bang for your buck. And that can include existing libraries, showing which are already adding value (or need to be dropped, saving money).

Either way, it’s a vital first step. You should populate a table of skills x vendors, with each cell holding the number of relevant assets that vendor offers for that skill. That’s an important milestone in the decision-making process, as it lines vendors up side by side and evaluates them on a single, comparable metric: relevance. And it gives you data-based ammunition for procurement conversations.
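As a rough illustration of the skills x vendors table, the sketch below counts relevant assets per cell from a list of (vendor, skill) pairs. The vendor names, the sample data and the `relevance_table` helper are hypothetical; it assumes the content has already been tagged against your framework by whatever means you use.

```python
from collections import Counter

# Hypothetical tagged catalogue: one (vendor, skill) pair per relevant asset tag.
tagged_assets = [
    ("Vendor A", "Resilience"),
    ("Vendor A", "Resilience"),
    ("Vendor A", "Teamwork"),
    ("Vendor B", "Resilience"),
    ("Vendor B", "Decision-Making"),
    ("Vendor B", "Decision-Making"),
    ("Vendor B", "Decision-Making"),
]

skills = ["Resilience", "Teamwork", "Decision-Making"]
vendors = ["Vendor A", "Vendor B"]


def relevance_table(assets, skills, vendors):
    """Return {skill: {vendor: count}}, with zeros for empty cells."""
    counts = Counter(assets)
    return {s: {v: counts[(v, s)] for v in vendors} for s in skills}


table = relevance_table(tagged_assets, skills, vendors)
for skill, row in table.items():
    print(skill, row)
```

Cells with a zero are as informative as the large ones: they show where a vendor offers nothing for a skill you care about.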

All the other considerations…

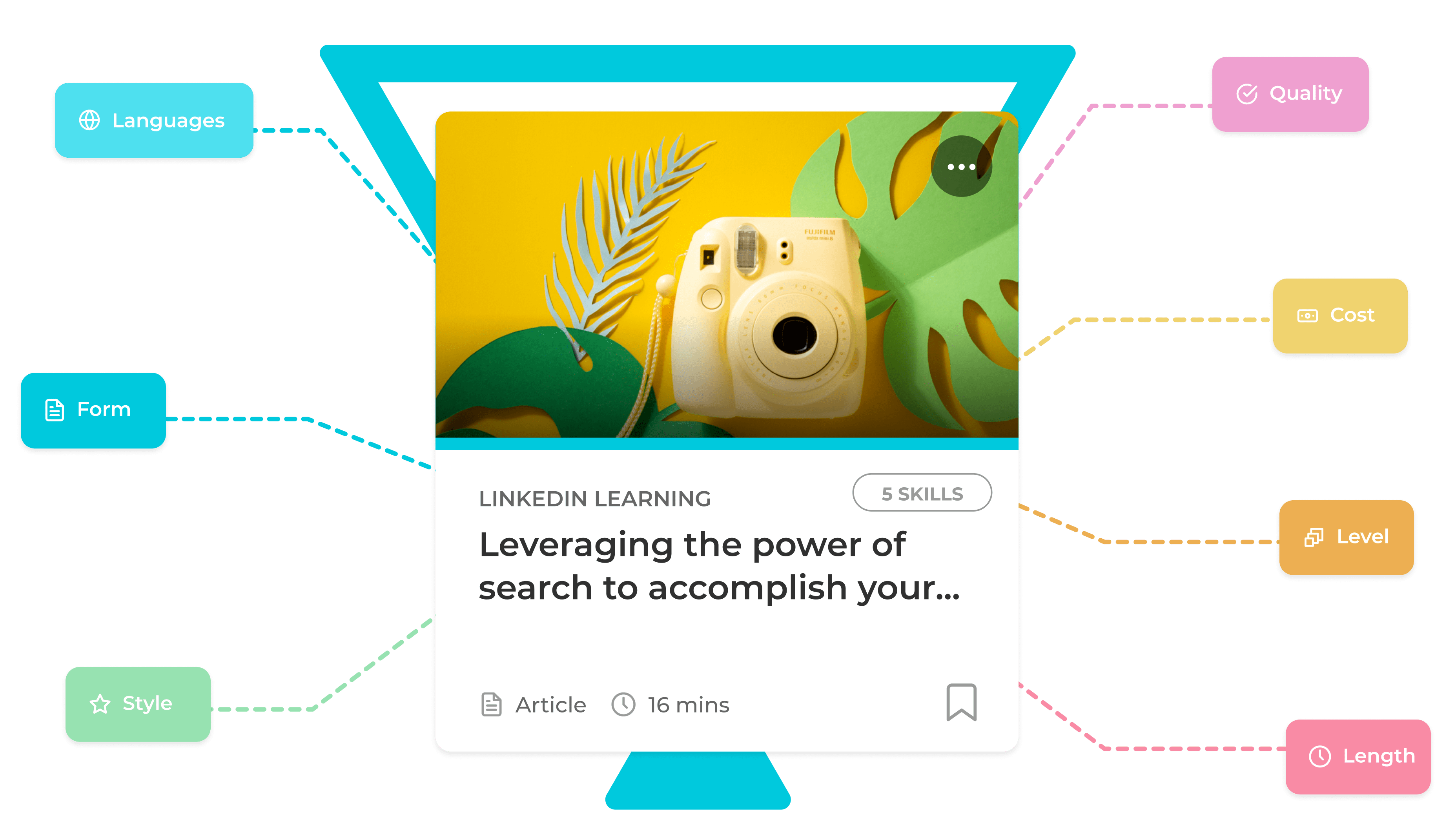

After relevance comes everything else. The list of other considerations will vary from organisation to organisation but in our experience, these seven are the most important for our clients, roughly in decreasing order of priority:

- Format*

- Languages*

- Cost*

- Quality

- Length*

- Level*

- Style

A crude but tried, tested and easily explainable process from here on in is to weight each of these factors and award a score for each factor, for each library. The asterisked items are where the vendor should be able to provide plenty of information. If they can’t or won’t, perhaps the vendor shouldn’t make your shortlist.
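The weight-and-score process can be sketched in a few lines. Everything numeric here is an assumption for illustration: the weights simply fall from 7 to 1 in the order the factors are listed, the scores are on an arbitrary 1 to 5 scale, and the library names are made up.

```python
# Illustrative weights in decreasing order of priority (assumed, not prescribed).
WEIGHTS = {
    "format": 7, "languages": 6, "cost": 5, "quality": 4,
    "length": 3, "level": 2, "style": 1,
}


def weighted_score(scores, weights=WEIGHTS):
    """Weighted average of per-factor scores for one library."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[f] * scores[f] for f in weights) / total_weight


# Hypothetical 1-5 scores awarded to two libraries.
library_scores = {
    "Library X": {"format": 4, "languages": 5, "cost": 3, "quality": 4,
                  "length": 3, "level": 4, "style": 2},
    "Library Y": {"format": 3, "languages": 2, "cost": 5, "quality": 3,
                  "length": 4, "level": 3, "style": 5},
}

ranking = sorted(
    library_scores,
    key=lambda name: weighted_score(library_scores[name]),
    reverse=True,
)
for name in ranking:
    print(f"{name}: {weighted_score(library_scores[name]):.2f}")
```

Because the result is a weighted average, it stays on the same scale as the individual scores, which keeps it easy to explain to stakeholders.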

The remaining two items - quality and style - are far more subjective. Vendors - including us - will have some data to help here (such as, in our case, a user-generated usefulness score for a lot of providers). And you may have some data on completions or anecdotal feedback to bring to bear. But ultimately, you will need to offer an opinion on these, as they pertain to your organisation and your industry.

Then, compile all of your data and you can start to determine...

Value for money

Knowing the relevance and general strength of a library is one thing. But how do you establish a link between your library rankings and how much they each cost? Value for money is different from raw cost and takes several factors into account.

The first is about deal length. The big, powerful content vendors insist on multi-year deals. At the other end of the spectrum, many smaller vendors are willing to offer free or inexpensive pilots. Not only might such pilots offer you a negotiating angle, the pilot itself might be interesting. Your workforce is much less likely to have encountered this kind of content, so the experience may be refreshing and the feedback illuminating (e.g. salespeople love book summaries; a web curation service beats library content on engagement; actually, short-form content is not as well-received as we had thought…).

A second perspective that might help is the notion of a cost per qualifying asset (CPQA). As vendors boast thousands or tens of thousands of items of content on which your workforce is invited to gorge on an all-you-can-eat, SaaS basis, you counter with an argument by filters. Sure, we start with 20k assets. But only 5k are relevant to our skills. And of those, only 4k are of a preferred format type. We understand that only half of those are in all the languages we need, leaving, say, 2k. And of those, 1,500 are at too basic a level for our organisation. So only 500 are left. Your price is £50k per year, which makes this £100 per qualifying asset per year. How does this sound to you, first of all? And then put it to the content vendor - does this give them grounds to offer a small or large reduction, for you, a formidable, data-literate, commercially astute buyer?
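The filtering arithmetic in this example can be written out as a short sketch. The figures are the ones quoted in the paragraph; the `cost_per_qualifying_asset` function and the `funnel` structure are hypothetical names used only for illustration.

```python
def cost_per_qualifying_asset(annual_price, qualifying_assets):
    """Annual licence price divided by the assets that survive every filter."""
    if qualifying_assets <= 0:
        raise ValueError("no qualifying assets: CPQA is undefined")
    return annual_price / qualifying_assets


# Assets remaining after each successive filter, using the figures from the text.
funnel = [
    ("library size", 20_000),
    ("relevant to our skills", 5_000),
    ("preferred format", 4_000),
    ("all required languages", 2_000),
    ("not too basic", 500),
]

for stage, remaining in funnel:
    print(f"{stage}: {remaining:,} assets")

annual_price_gbp = 50_000
cpqa = cost_per_qualifying_asset(annual_price_gbp, funnel[-1][1])
print(f"CPQA: £{cpqa:,.0f} per asset per year")
```

Keeping each filter as its own row makes it easy to show a vendor exactly where their headline number shrinks.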

Content Intelligence can automatically calculate CPQA for your target skills and libraries.

Thirdly, web-based alternatives. We said above that the best of the web beats any library in terms of quality. The counterargument from the content providers is that you can’t be sure of quality, consistency or cohesiveness. There’s some truth in that. Indeed, the worst of the web is worse than any library too! But the truth is that libraries are also vulnerable to criticisms about quality and cohesiveness. And subject to usage rules (and the costs of curation) the CPQA of the web is zero.

Skillsoft vs LinkedIn Learning

These two companies still dominate the generic corporate learning content space. Both have been around for a couple of decades (in the case of LinkedIn Learning, most of that time was as Lynda.com), both have many hundreds of large corporate clients and thousands of clients in total. They also both have large, tenacious sales teams. And there’s huge overlap between the two. Yet we see a lot of large companies paying for both libraries. Is that worth a seven-figure investment, recurring every year? Maybe it is: working with both Skillsoft and LinkedIn Learning guarantees huge breadth and association with two of the biggest brands in the industry. What’s new and now possible is to quantify that overlap and make proactive, data-informed business decisions, rather than leaving it to inertia and what’s gone before.
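One simple way to put a number on overlap, offered here only as a sketch and not as the method any vendor or tool uses, is to compare which of your framework skills each library covers. The per-skill counts, the `min_assets` threshold and the `skill_overlap` helper are all hypothetical.

```python
def skill_overlap(coverage_a, coverage_b, min_assets=1):
    """Compare two libraries by the framework skills each one covers.

    A skill counts as covered when a library has at least `min_assets`
    relevant assets for it. The ratio is shared skills / all covered skills.
    """
    a = {s for s, n in coverage_a.items() if n >= min_assets}
    b = {s for s, n in coverage_b.items() if n >= min_assets}
    union = a | b
    return {
        "both": sorted(a & b),
        "only_a": sorted(a - b),
        "only_b": sorted(b - a),
        "overlap_ratio": len(a & b) / len(union) if union else 0.0,
    }


# Hypothetical counts of relevant assets per skill for two libraries.
library_one = {"Resilience": 120, "Teamwork": 80, "Excel": 200, "Sleep Management": 0}
library_two = {"Resilience": 95, "Teamwork": 60, "Difficult Conversations": 40}

result = skill_overlap(library_one, library_two)
print(result)
```

A high ratio suggests the second licence adds little breadth for your framework; the `only_a` and `only_b` lists show what you would lose by dropping either one.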

If your organisation pays for both Skillsoft and LinkedIn Learning, do you need both? (We can provide some guidance here.)

Learning technology ecosystems

This is the point where we need to acknowledge the important role that technology – and in particular what we in the learning community would refer to as the learning technology ‘ecosystem’ – plays (both good and bad) in surfacing content for learners.

You might not consider your organisation to have a learning ecosystem but inevitably, you will have one of some form regardless of whether it has been strategically designed or not.

Your ecosystem may have a mix of technologies such as:

- An LMS or SharePoint for your proprietary content

- External cloud services for your library content

- Web browsers and firewalls that enable access to web content

All of these technologies can hinder as much as help users get to content in a timely manner. For example, is your firewall blocking access to useful content? Try that link we added earlier in the paper to Ludwig on Twitch – many large enterprises block that site, despite it holding useful learning content. Many social networks such as Twitter and YouTube started off being blocked at corporates and eventually opened up once they were found to be useful for learning.

If users have a problem to solve, are you relying on them to know where to find the answer? Should you be providing an enterprise search feature that will allow them to search for tagged content across your LMS, SharePoint, external libraries and the web? Just how do you stitch this all together? All too often, learners don’t know where to start – presented, as they often are, with a mish-mash of poorly stitched-together learning channels – and so either don’t bother or access their information from non-validated sources (colleagues or YouTube). That’s not necessarily a bad thing but neither is it optimal. Single sign-on (SSO) can help but it’s often not a panacea for creating a relevant and seamless learning experience, especially if learning channels and content have not been sufficiently mapped to skills needs (or each other!) and the look, feel and usability are uneven.

And from an L&D, IT and Procurement perspective, the web of content optimisation, hosting, account management and (re)-procurement required to support a poorly performing ecosystem can and often does feel like managing spaghetti.

For all of these reasons, a well-designed and expertly implemented learning ecosystem becomes crucial, with manifold benefits in terms of both learning effectiveness and resource (time and money) optimisation.

As with many things in life, knowing where to start is often the most challenging part. Many learning leaders know that they need to build an effective learning ecosystem that engages learners with a seamless and personalised learning experience but quickly get caught up in the weeds of not knowing:

- Which direction to head in

- What the right activities are, and in what order

- Whether they are about to spend two years and a lot of money going down a rabbit hole with limited business impact

To mitigate these risks, it is imperative to understand the broader human and organisational ‘ecosystem’ that the learning technology ecosystem will be part of. But where to start? In our experience, there are five core areas you need to understand before embarking on your learning ecosystem journey:

1. Culture and Employee Experience Considerations

- What does your current employee experience look like; how do you engage and communicate with your employees?

- What motivates your employees to learn and how do they like to ‘consume’ their learning? How does this differ between learner types or ‘personas’?

- Are your learners experienced and proactive in navigating their own learning journeys? Is this the same across the board? What can be done to learn from ‘strong’ learners (individuals, teams, communities) and put more scaffolding around those that require it?

- More fundamentally, has learning been embedded into the culture of the organisation? Do you know what good learning behaviours look like? And are those in ‘high-influence’ roles consistently exhibiting them?

2. Business Considerations

- What are the organisation's strategic objectives and People strategy, and where does learning fit into them?

- Is there a clear line of sight between the organisational strategy, strategic people/workforce plans, your skills and learning needs and your content optimisation and learning ecosystem strategy?

- Is there a strong understanding of the costs of investment in learning (and learning technologies) and the required return on that investment in terms of a desired impact on key organisational performance indicators? What would be considered a sufficient RoI and how would you know you’ve realised it?

3. Career and Skills Development Considerations

- What does a career development path look like for an employee?

- What are the current career pathways in place and are they underpinned by a clear Job Family Architecture (JFA) and skills frameworks?

- What does your content strategy (internal, external sources, user-generated content) look like?

- What leadership and management programs and learning programs do you currently have in place to support career growth?

4. Technology and Software Considerations

- What does your current learning technology infrastructure look like and does it align to the HR Technology Ecosystem?

- What current software do you use in learning to deliver experiences for employees?

- Where do your learners go to learn and are they using resources outside of your ‘official’ learning ecosystem?

5. Operational Considerations

- How do you currently administer and manage learning within your organisation?

- What is the current team structure relative to learning experience and technologies and does it operate effectively and deliver excellence?

- What are the respective roles for L&D, IT and Procurement stakeholders?

- Is the right financial framework in place to support your learning ecosystem?

- Do you have the right mapping, processes and policies in place to deliver operational efficiencies?

Whilst the above is not a definitive list, having a solid understanding of these areas will help you to identify, deliver and deploy the most effective learning ecosystem for your employees, one which supports the right experience at the right time, in the right way.

It's also worth noting that you can capture these outputs in a 'Functional Requirements List'. The list should not be a technology list but rather a record of 'what we want employees to do' and what you need to put in place to deliver this. By logging requirements systematically and transparently, you can start planning the changes you want to make in each area over time, thus informing a learning ecosystem development roadmap.

This discipline would sit beneath any effective IT systems integration, yet in learning, technology decisions are often taken in a more ad hoc and organic way. We think it’s time for a change.

Buying your content is a major investment and an important milestone. But it’s only the beginning of the journey for your workforce, who will go on to use and enjoy that content over the months and years ahead. It’s not within the scope of this guide to describe how to make the most of an LMS or LXP for learners. But we will pick out some considerations that we’ve found to be especially valuable in the application of both Content Intelligence and Filtered’s own LXP.

Learning content curation criteria

Make your content even more relevant. Go beyond skills. Make your generic content as bespoke to your people, your organisation and your industry as you possibly can. A big part of that will inevitably be skills. But think about what else your intended audience will care about. Combine skills with some of the factors below to get to a smaller set of material which will go down better and make more of an impact than lists of endless, generic content.

- Industry - A skill like project management can be taught, to an extent, with generic material. But if the examples from the content are from your industry - perhaps some are even taken from your main competitors - they will land better and bring stories that people at your organisation will want to hear and retell.

- Business function - Much like industry, a generic skill couched in the style and language of the relevant business function (e.g. marketing, digital marketing, HR, finance), and drawing on examples from it, makes a greater impact.

- Durability - Some organisations insist on recent content. While some material is perishable (e.g. technologies which are no longer used), other content can be evergreen, partly because it has stood the test of time and partly because it makes the point better, with a bigger impression or even a degree of poignancy. See RedThread’s excellent piece on perishable vs durable (aka evergreen) content.

- Gender-related content - Some organisations prefer to remove material that is directed at a particular gender. There are many reasons for doing this and many reasons not to. But whichever way you choose to go, go intentionally, with an awareness of what’s possible. Note that there are at least two issues here - what the content is about and who it’s written by. Both, or neither, or just one might be more important for your intended audience.

- Race - Everything discussed in the previous point applies here. There’s an extra complication: the race of the authors and experts behind learning content is harder to account for in data than gender, which makes it harder to ensure diverse and inclusive representation.

- Popularity - Proceed with caution. If this is your bellwether for good content, you’re at risk of placing your entire workforce in a filter bubble (read this prescient piece by the brilliant Eli Pariser) and supporting narrow-mindedness and uncreative thinking. On the other hand, there is some popular content that it’s useful for us all to have an awareness of.

- Depth and format - For example, is this a deep dive into machine learning or an article to build awareness of the possibilities of ML? The former may be a first step towards a major career change. The latter is much lighter. You’ll need to strike the right balance between the two. And how is it formatted? Some prefer structured content with formal references, others prefer a three-minute bitesize video.

- Geographies - Much of what was said above about industry and business function applies here too. There’s an extra connection if the geographies from which examples are taken are closer to your organisation’s home, be that USA, EMEA, Alaska, Mongolia or Peckham.

- Controversial issues - This covers content which even mentions topics such as abortion, the death penalty, political sensitivities, social sensitivities or religion, or which contains expletives. Most large companies want to steer well clear here. That’s understandable but it also takes out a lot of rich and potentially rousing content. As with some of the other items above, know your audience and use your judgement to make an intentional call here.

- Current affairs (e.g. climate, sustainable energy, diversity, culture, politics) - None of these are skills. But an awareness of the issues at play may be directly or indirectly useful for many people in your workforce.

With many of these, intelligent, skills-focused NLP algorithms can hugely accelerate the curation process so that within a few minutes you have a small, manageable subset of content which is fit for the skills and behaviours and awareness that your organisation wants to see more of in your colleagues. Your commentaries, exercises, pathways and campaigns will be all the more nourishing and appreciated for this.

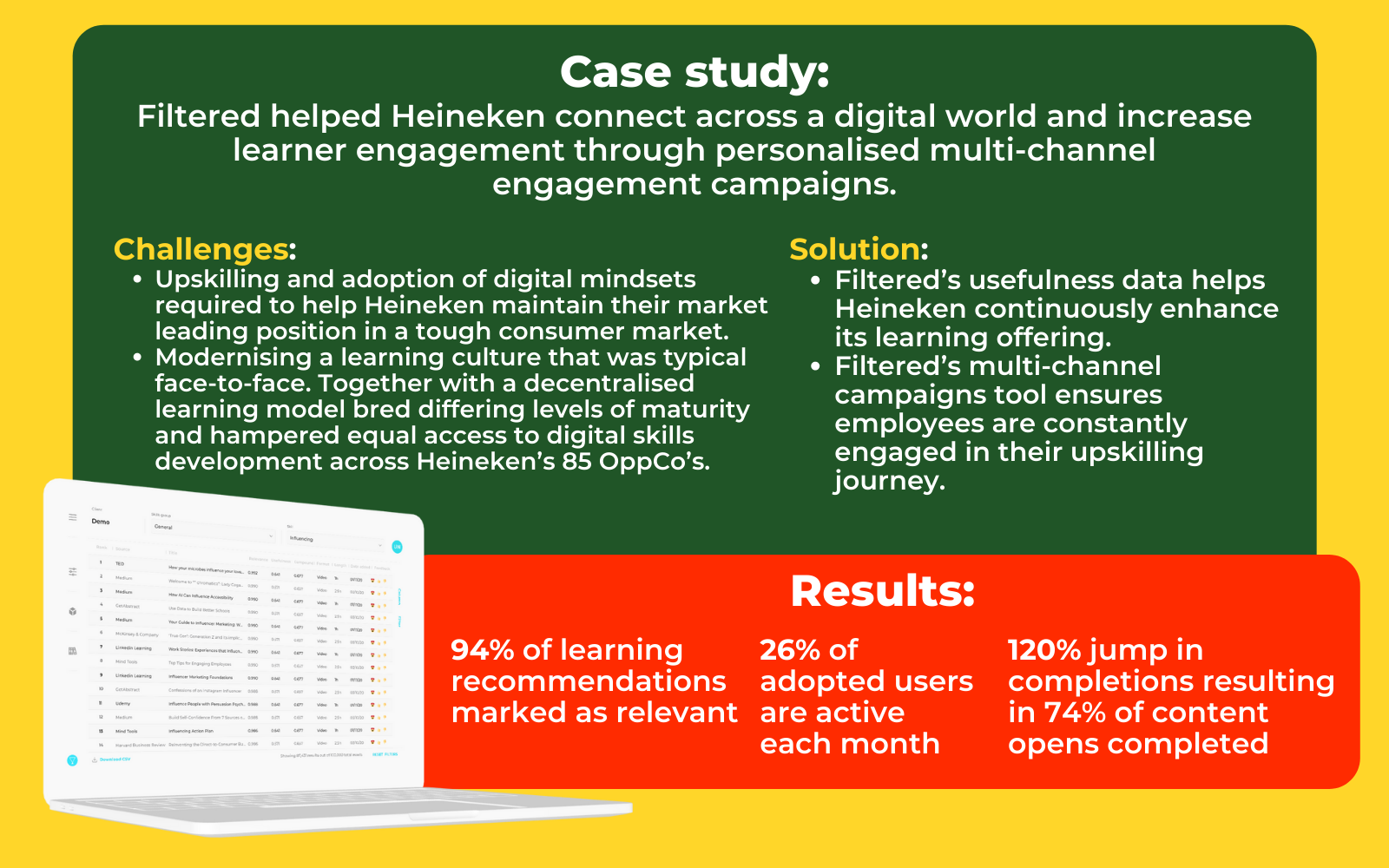
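To make the idea of combining skills with other criteria concrete, here is a minimal sketch of a rule-based filter. It is an illustration only, not a description of how Content Intelligence or any NLP algorithm works: the `ContentItem` fields, the `curate` function and its parameters are all hypothetical, and it assumes each asset has already been tagged with skills, industry, year and sensitive topics.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class ContentItem:
    # All fields are hypothetical tags assumed to exist for each asset.
    title: str
    skills: FrozenSet[str]
    industry: str = "generic"          # "generic" means not industry-specific
    year: int = 2021
    evergreen: bool = False            # durable content is exempt from the recency rule
    topics: FrozenSet[str] = frozenset()  # sensitive topics mentioned, if any


def curate(
    items: Iterable[ContentItem],
    skill: str,
    industry: str,
    min_year: int,
    excluded_topics: Iterable[str],
) -> List[ContentItem]:
    """Return a shortlist: right skill, suitable industry, durable or recent, no excluded topics."""
    excluded = frozenset(excluded_topics)
    shortlist = [
        item
        for item in items
        if skill in item.skills
        and item.industry in (industry, "generic")
        and (item.evergreen or item.year >= min_year)
        and not (item.topics & excluded)
    ]
    # Stable sort: industry-specific items come first, generic ones after.
    return sorted(shortlist, key=lambda item: item.industry != industry)
```

Each condition maps to one criterion from the list above (skill, industry, durability, controversial issues). Whether to exclude a topic, or to require recency at all, remains the intentional call described in the text; the code only applies it consistently.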

Engagement and campaigns

Build it and they... don’t necessarily come. Your learning platform is not the most important place in your colleagues’ working lives, whatever the costs, the features, the implementation and integration efforts, and even if you’ve bought and curated the very best content for your organisation.

All our experience in L&D, and the evidence from media consumption generally, point to intelligent nudges being the most important means of holding attention and bringing users back. Right content plays a crucial role here; you need to nudge your users to something they will like and care about.

Engagement is essential (for lots of reasons, including being able to properly evaluate the worth of your content to your audience - see section below) but it’s beyond the scope of this piece to say more. Here are ten ways to boost it.

Building a meritocratic learning culture

Evaluate everything, promote the good, retire the bad

You’re up and running and learners are learning. What does good look like? How do you evaluate content?

We make a balanced scorecard assessment, looking at a range of measures which all contribute to the conclusions. The obvious ones are clicks and completes. In our LXP, we also ask users whether they found the content useful or not; that’s built right into the UI. But ideally you go further downstream, running automated surveys to find out whether the content has been applied at work and whether it has contributed to business outcomes, including free text and long-form anecdotes. Data capture will inevitably diminish as you strive for ultimate business impact. We all know that. So get numbers at the start (clicks and completes), nuance towards the end and combine the two perspectives. By adopting an approach like this you can generate a report (we use Tableau) which ranks individual pieces of content and aggregates up to rank providers on the same combined measure.
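As a rough illustration of a scorecard of this kind, the sketch below combines a few measures into one weighted score per asset, ranks the assets, and then averages the scores to rank providers. It is not the actual scoring behind our reports: the measure names, the `WEIGHTS` values and the function names are assumptions made for the example, and each measure is assumed to be a rate between 0 and 1.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Tuple

# Hypothetical weights: downstream measures (useful, applied) count for more
# than raw completion. Choose your own to reflect what your organisation values.
WEIGHTS: Dict[str, float] = {
    "completion_rate": 0.2,
    "useful_rate": 0.4,
    "applied_rate": 0.4,
}


def score(asset: dict) -> float:
    """Weighted sum of the measures for one piece of content."""
    return sum(weight * asset[measure] for measure, weight in WEIGHTS.items())


def rank_assets(assets: List[dict]) -> List[dict]:
    """Individual pieces of content, best score first."""
    return sorted(assets, key=score, reverse=True)


def rank_providers(assets: List[dict]) -> List[Tuple[str, float]]:
    """Providers ranked by the mean score of their assets, best first."""
    scores_by_provider = defaultdict(list)
    for asset in assets:
        scores_by_provider[asset["provider"]].append(score(asset))
    averages = [(provider, mean(scores)) for provider, scores in scores_by_provider.items()]
    return sorted(averages, key=lambda pair: pair[1], reverse=True)
```

A simple mean treats every asset equally. If survey response rates are low for some assets, you may prefer to weight by the number of responses, or to set a minimum sample size before an asset counts towards its provider's score.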

On a regular basis (we run these quarterly), you should discuss that data in order to retire or promote libraries and individual pieces of content. The discussions should also include any other cuts of the data (see the multiple lists in this article, for example) to draw out insights your organisation needs.

This data and these review meetings also form the basis for the decision-making process at the end of the arrangement with your supplier, be that a pilot or a three-year subscription. And so the cycle of renewal and procurement decision-making begins again. Only this time you’ll have far more data and evidence to draw on.

Develop your own philosophy

You should have a learning philosophy for your organisation. And you should have one for your own career. If you have a philosophy, all the decisions along the path to right content become easier.

A dominant philosophy in this industry has been that everything should be in one place. To be learner-centric there must be unlimited convenience for learners and what is more convenient than everything in one place, like Amazon, Walmart or the internet? This is compelling and has been highly effective.

As you may have gathered from this article - or just from our company name! - our philosophy is diametrically opposed to this. We think that not all content is useful. Indeed, that most content is not useful. So we’re single-minded in our attempt to get clients to a smaller, punchier inventory. In this world, relevance and quality are higher. In this world, we get more usage data for each and every asset so they can be benchmarked and treated accordingly. In this world, we’re able to cultivate a familiarity with the content - water cooler moments. And in this world, we can bring back the lustre which has been sadly lacking from learning. By making learning easy to access, deeply relevant and just enough – we elevate the learning experience in such a way as to inspire genuine learner ownership, individual and peer-to-peer – the nirvana of a positive learning culture.

There are multiple takes on a less-is-more, filtered philosophy - which we invite you to explore with us or on your own. There are many clues in this article, most explicitly in the lists of considerations for content. But take it further, yourself. Do you want to inspire? Do you want to be positive? Or would you prefer to show an unvarnished reality? Or is it all about user choice and discoverability? Does your position resonate enough with the values and priorities of your employer? If you can develop your own philosophy about the right learning content, you’ll do your job better, make your life easier and enhance your own career and personal brand.

Another philosophy could be built on a true, grounded opinion about algorithms, AI and ML as they relate to learning. These concepts are recited far too readily by L&D marketing departments. Like content, ‘AI-powered’ has become a commodity, at least in marketing copy. But seeing AI in operation in high fidelity and understanding its application and benefits tangibly is a worthwhile objective in 2021, for your organisation and your career. Few people can do this. You should be able to peer into the black box and maybe even influence algorithm design if you work with a vendor that truly knows (and owns) its software.

Getting the right content for your organisation is not the most glamorous charge but it may be the most important. Until now, the data, technology and thinking were not available to make great, granular decisions on this. They are now. Be sure to make good use of them, for your organisation, your colleagues and your career.

Finally, don’t forget, there's magic in learning. The magic is not the asset or the platform or even the learner. It’s the felt experience of learning, when that ineffable moment of resonance or inspiration or reassurance or clarity occurs. That’s the asset and the person and the medium coalescing in a way that the world’s philosophers and physicists still can’t work out. What is magic, if not that? We only need a little of that to spark learning joy, and work and live happily ever after. It all starts with the right content.

- Marc Zao-Sanders, Filtered CEO