It’s a big question. It was put to us by Richard Caswell at Costa Coffee. And it’s important. So in this article, I explain three ways that Filtered delivers measurable return on investment.

But first, why? You might argue that all learning is good and that investments in learning do not need to yield ROI to be valuable. I’m also inclined to agree with that whenever I can.

However, all spending decisions have consequences. Misspent money could be better spent elsewhere (Donald Clark reflects on the money the UK spent on LearnDirect here).

Like it or not, we have a responsibility to both hypothesize and prove the ROI from any learning programmes and solutions that require substantial investment.

The problem with ROI & learning is that learning itself is too nebulous or disputed to measure. You can only measure outputs from learning: test scores or actual performance.

In organisations (and in life) it’s hard to disentangle these outputs from their contexts. I might argue my training programme delivered a million dollar increase in sales. You could counter that marketing spend delivered that increase. We end up arguing about interacting variables.

Digital investments are also prone to deliver dramatic failures of ROI because they often rely on engagement. Skillsoft was kicked out of New Brunswick when a deployment that cost its government £470,000 reached only 1,000 citizens out of a target of 20,000.

Yikes. So, as Richard put it to me: how does Filtered pay for itself?

There are three main sources of ROI from Filtered, all derived directly from the use cases we currently see in buyers and renewers. They range from the most obvious, immediate & tactical (for smaller organisations - a few thousand employees or fewer) to the harder, more strategically important measures (for organisations with many thousands of staff).

1) Replaces paid course libraries with short resources people actually use

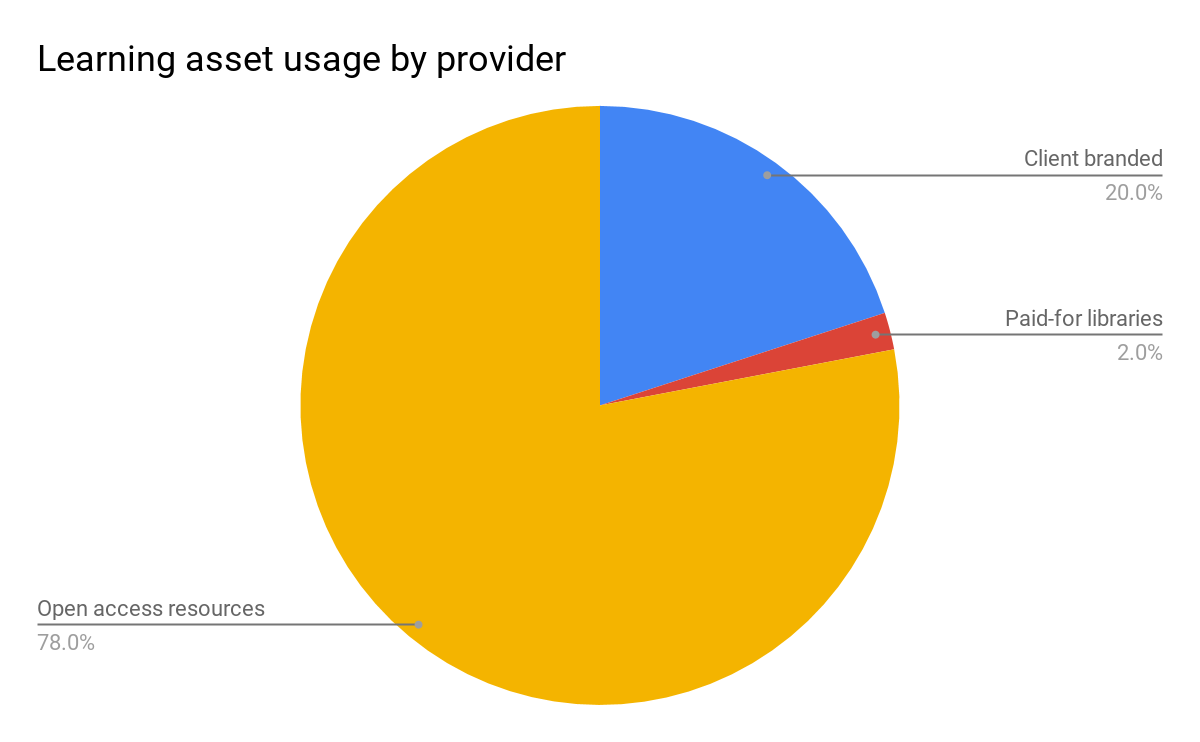

We've found that, with Filtered, the resources people use come from three kinds of provider: 1) internally created or branded, 2) high quality, open access providers, such as School of Life, Medium, Wikihow, and 3) paid-for libraries. Here is how usage breaks down at one representative client by provider (as indicated by branding): client-branded assets: 20%, open access providers: 78%, external content libraries: 2%. Pathgather (now Degreed) have published similar findings about the relatively low usage of external libraries.

Bottom line: You don't need to spend £100k+ on third-party libraries when you have Filtered highlighting the most relevant assets from your own material and bringing in the best free stuff from the web to fill any gaps. This has driven many initial purchases and now it’s driving our high renewal rate. (It's also a great example of how Filtered enables evidence-based, data-driven decisions.)

2) Saves time by dealing with the long tail of training recommendation requests

How many times does your L&D team recommend the same course on business writing or on Excel every year? Filtered's chatbot can handle this sort of request. Put that course in Filtered with all the others and redirect all enquiries to Filtered.

Let's say each request takes your L&D manager 20 minutes to deal with and let's say the average fully-loaded cost of dealing with that request (with all the staff involved and associated overheads) is £50. If 2,000 employees make on average three learning requests a year through their managers to L&D, that's 6,000 requests a year. If Filtered can deal with just half of them, that's a saving of ~£150k a year. More than that, it’s mind-numbing work: precisely the kind of thing that should be automated so your L&D can focus on higher-value, more interesting, more human challenges.
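If you want to run the same back-of-envelope sum with your own numbers, here is a minimal sketch. The function name `annual_request_saving` and its parameters are just illustrative, and the inputs are the assumed figures from the example above rather than measured data.

```python
def annual_request_saving(employees, requests_per_employee,
                          cost_per_request, share_automated):
    """Estimate the yearly cost of the requests that no longer reach L&D.

    All inputs are assumptions you supply; nothing here is measured.
    """
    total_requests = employees * requests_per_employee
    automated_requests = total_requests * share_automated
    return automated_requests * cost_per_request


# Figures from the example: 2,000 employees, three requests each per year,
# £50 fully-loaded cost per request, half of the requests handled automatically.
saving = annual_request_saving(2000, 3, 50, 0.5)
print(f"Estimated annual saving: £{saving:,.0f}")  # Estimated annual saving: £150,000
```

The result is most sensitive to the cost per request and the share automated, so those are the two inputs worth checking against your own data first.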

One client is a team of two people serving a global audience of 2,000+ employees. They are using Filtered to help them achieve exactly this sort of scalability.

Bottom line: As a discovery solution that actually works - i.e. makes good recommendations without relying on keyword searches - Filtered takes the pressure off L&D managers besieged by requests for learning recommendations. We have projects with major multinationals roughly on this basis: putting the learning ecosystem they’ve already built or paid for to work.

3) Reveals what skills to invest in to deliver improvement and therefore gains

We commissioned research on the cost of low skills and, looking at the Employer Skills Survey, our economists found that even an organisation with 250+ employees is losing £80 million a year because of skills gaps. One example of a missing skill that leads to losses is poor writing costing deals. Elsewhere, we proposed a straightforward way to measure the impact of training in a skill (such as Excel) that employees use regularly. An accountant getting 5% better at Excel is worth a lot in increased productivity.

Any solution that can effectively address skills development rather than wasting user time is worth a lot to your business if you understand and value the relationship between skills and profits.

But you can’t invest in skills development if you don’t know the specific focus areas for your business. In his article, Donald points out how LearnDirect became ‘a remedial plaster for the failure of schools on literacy and numeracy’ rather than a strong vocational alternative to HE. A lot of corporate skills investment is like this: reactive, political, remedial.

Filtered allows you to understand the learning needs that really exist amongst your people. Not in general or derived from their keyword searches, but through the specific lens of user activity against your skills framework.

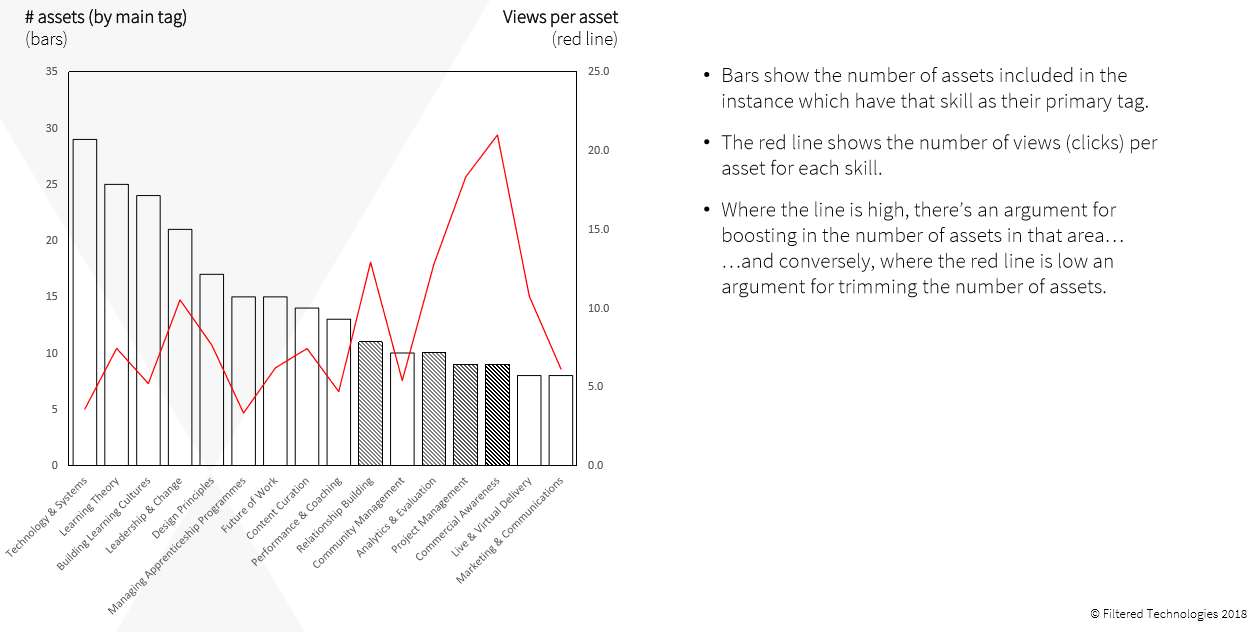

Here’s what the picture looks like for the version of Filtered we built for L&D:

You can see the errors in our provisioning clearly.

The appetite for learning by L&D professionals in the areas of ‘analytics and evaluation’, ‘project management’, ‘commercial awareness’ clearly outstrips supply. On the other hand, our assumption that ‘technology and systems’, ‘learning theory’ or ‘building learning cultures’ were strong requirements for L&D professionals meriting more content has been disproven.

Bottom line: Skills gaps cost organisations millions. Filtered helps you address them better. It’s not a traditional training needs analysis because it reveals active learning preferences vs your provision rather than having people self-identify weaknesses. However, since learning is effortful, active engagement is critical. When you’re spending millions on training every year, understanding the real focus areas of your people is worth an awful lot.